Today’s focus is on address and data quality solutions from Address Solutions.

How good is my database? How can I measure data quality? What problems are in the data? What impact do they have on my processes? What action do I need to take? In what order should the processing take place? How can I establish a permanent process? How can Address Solutions help my company?

These are the questions that typically come to mind on this topic. This field report answers them.

Below you will find our most important findings from an exciting conversation with Jörg Kleinbrahm and Ralf Geerken, both managing directors of Address Solutions.

- AS Inspect is used for problem detection and handling

- Structure of the test steps

- Duplicate check

- The role of AI

- Our conclusion

AS Inspect is used for problem detection and handling


The aim of the application is:

With little effort, the user should get an overview of the data quality. Using the Address Solutions rule set, the system recognizes what each field contains and names the uniquely recognized fields accordingly. Fields that are not recognized are simply numbered consecutively, and the user gives them the appropriate label. Fields that AS Inspect has recognized incorrectly can easily be renamed or mapped manually, and the mapping of criteria labels can be customized.
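To make rule-based field recognition concrete, here is a minimal Python sketch. It assumes that a field counts as recognized when most of its filled values match exactly one simple regular-expression rule; the labels, patterns, the 80% threshold and the function name `recognize_fields` are all hypothetical and are not the Address Solutions rule set. Fields that no rule matches unambiguously are numbered consecutively so the user can label them.

```python
import re

# Hypothetical content rules (label -> pattern a value must match).
# Illustrative only; not the Address Solutions rule set.
FIELD_RULES = {
    "Email": re.compile(r"^[^@\s]+@[^@\s]+\.[A-Za-z]{2,}$"),
    "PostalCode": re.compile(r"^\d{5}$"),                     # assumes German 5-digit codes
    "DateOfBirth": re.compile(r"^\d{1,2}\.\d{1,2}\.\d{4}$"),  # e.g. 24.12.1970
    "Phone": re.compile(r"^[\d\s/+()-]{6,}$"),
}


def recognize_fields(columns, min_share=0.8):
    """Name each column after the single rule matching most of its filled values;
    columns matched by no rule (or by several) are numbered Field_1, Field_2, ..."""
    names = []
    unknown = 0
    for values in columns:
        filled = [v.strip() for v in values if v and v.strip()]
        hits = [
            label
            for label, pattern in FIELD_RULES.items()
            if filled
            and sum(bool(pattern.match(v)) for v in filled) / len(filled) >= min_share
        ]
        if len(hits) == 1:
            names.append(hits[0])
        else:
            unknown += 1
            names.append(f"Field_{unknown}")
    return names
```

Renaming a misrecognized field then simply means editing the returned list of names.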

The benefit of good field recognition combined with flexible naming is illustrated by the structure of a typical contact address:

[Figure: structure of a contact address]

For the product demonstration, a test dataset of about 25,000 records was prepared as a CSV file. The user is guided through the test process in a few steps.

Processing at Address Solutions is very fast


25,000 records are analyzed in 2 to 3 minutes; for 100,000 records, the runtime until first results are available is about 10 minutes. Everything moves at a fast pace.

Questions for the Address Solutions team:

1. Can you create a variable set in advance for the import that can then be reused again and again?

2. Are anomalies already detected during import, for example day and month swapped in a date of birth?

The answers from the experts at Address Solutions are:

- Of course, you can create as many variable sets as you want and save them under a meaningful file name in any directory!

- During import, the data is taken over exactly 1:1; anomalies are detected afterwards. We do not want to exclude any data during import and lose it for later analysis!

What are the testing steps in detail?

For a first overview, three checks are run: an address check, a name check and a duplicate check.

As mentioned, the check run is very fast: after about 2 minutes (for about 25,000 records) it produces three standard reports that clearly answer the following question:

- Are there any problems in the dataset? If so, what are they?

In addition to the three reports, you get a fill-level analysis showing which fields are populated at all, regardless of the quality of their content. A traffic light gives the user an immediate indication of how good or poor the condition of the data is.
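A fill-level analysis with a traffic light can be sketched in a few lines. This assumes records are dictionaries and counts a field as filled when it holds any non-blank value (content quality is deliberately ignored); the thresholds of 95% and 80% and the function names are hypothetical.

```python
def fill_levels(records, fields):
    """Share of records in which each field holds a non-blank value."""
    total = len(records)
    return {
        f: (sum(1 for r in records if str(r.get(f) or "").strip()) / total if total else 0.0)
        for f in fields
    }


def traffic_light(share, green=0.95, yellow=0.80):
    """Map a fill level to a traffic-light colour (hypothetical thresholds)."""
    if share >= green:
        return "green"
    if share >= yellow:
        return "yellow"
    return "red"
```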

Now for the details


For each inspection run, AS Inspect displays a further traffic light with explanations, together with the problems and weak points found. The user receives hints sorted into 9 categories:

1. Completely correct

2. Standard structuring

3. Structure corrected

4. Unknown/wrong salutation corrected

5. Unknown title

6. Unknown first name (e.g. foreign first names)

7. Unknown last name

8. Several fields with invalid content

9. Group of persons


The system then immediately suggests possible corrections, for example for swapped first and last names, first and last names in a single field, or incorrect capitalization.
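The following minimal sketch shows how two of these suggestions (capitalization and swapped first and last names) might be derived. It assumes a tiny, hypothetical first-name lexicon; a production system would rely on a far larger knowledge base and would treat particles such as “von” separately.

```python
# Tiny hypothetical lexicon of known first names (lower case).
FIRST_NAMES = {"herbert", "astrid", "ursula", "frank-walter"}


def fix_capitalization(name):
    """'hERBERT' -> 'Herbert'; each hyphenated part is capitalized."""
    return "-".join(part.capitalize() for part in name.split("-"))


def suggest_name_correction(first, last):
    """Fix capitalization and swap first and last name when only the
    last-name field holds a known first name. Returns (first, last)."""
    first, last = fix_capitalization(first.strip()), fix_capitalization(last.strip())
    if first.lower() not in FIRST_NAMES and last.lower() in FIRST_NAMES:
        return last, first
    return first, last
```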

Further optimization is possible, for example for the salutation, which is derived from the first name. Ambiguous cases such as Andrea, usually a male first name in Italian but a female one in German, are not corrected automatically; they are recognized and flagged for manual correction. Unknown first or last names (for example, ones containing digits) are highlighted.
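The salutation logic can be sketched as a lexicon lookup that deliberately refuses to guess for ambiguous names. The gender table, the English salutations and the status strings below are hypothetical.

```python
import re

# Hypothetical lexicon; "ambiguous" marks names whose gender depends on origin.
GENDER = {"herbert": "m", "astrid": "f", "ursula": "f", "andrea": "ambiguous"}
SALUTATION = {"m": "Mr", "f": "Ms"}


def derive_salutation(first_name):
    """Return (salutation, status). Ambiguous, unknown or invalid names get no
    salutation and are flagged instead of being corrected automatically."""
    name = first_name.strip().lower()
    if re.search(r"\d", name):
        return None, "invalid: contains digits"
    gender = GENDER.get(name)
    if gender is None:
        return None, "unknown first name"
    if gender == "ambiguous":
        return None, "manual review"
    return SALUTATION[gender], "ok"
```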

In most cases it is also useful to split up groups of persons: for example, when “Herbert and Astrid” are in one first-name field, two records can be created from it if desired. Up to 3 or 4 names can be separated this way.
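Splitting a group of persons into separate records might look like this. The sketch assumes names are joined by commas, “&”, “+”, “and” or “und”, and that fields with more than four names are left for manual review; both the separator list and the limit are assumptions.

```python
import re

# Hypothetical connectors joining several first names in one field.
# \b keeps names such as "Alexandra" or "Sandra" from being split.
SEPARATORS = re.compile(r"\s*(?:,|&|\+|\band\b|\bund\b)\s*", re.IGNORECASE)


def split_person_group(record, max_persons=4):
    """Create one record per person, copying all other fields."""
    names = [n for n in SEPARATORS.split(record["first_name"]) if n]
    if len(names) < 2 or len(names) > max_persons:
        return [record]  # nothing to split, or too many names for automatic handling
    return [{**record, "first_name": name} for name in names]
```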

Each analysis is backed by a report, available on screen or as a detailed PDF.

Analysis of address correctness


Each record receives separate scores, for example one for the ZIP code, one for the street name and one for the city name. From these, a total score is derived, together with a status code that gives further hints about anomalies.

Further hints indicate, for example, that the ZIP code, street or city could be corrected automatically; that ZIP code and street, or ZIP code, street and house number, do not match; that the data contains an unexpected foreign address; or that a district name in the City field was replaced by the correct city name.

Each record receives a ranking value. Up to a certain value, the customer can feed the corrected record straight back into the system; the higher the value, the more manual editing is needed.
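The relationship between partial scores, total score and routing could be modelled as below. The sketch assumes each component score counts deviations (0 means the value was confirmed unchanged), so a higher total means more manual work; the score scale, the `auto_limit` of 2 and all names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class AddressCheck:
    # Hypothetical deviation scores: 0 = confirmed as is, higher = bigger correction
    zip_score: int
    street_score: int
    city_score: int
    status_code: str = ""  # supplementary hint about anomalies


def total_score(check):
    return check.zip_score + check.street_score + check.city_score


def route(check, auto_limit=2):
    """Records at or below the limit go straight back into the system;
    everything above is queued for manual editing."""
    return "re-import" if total_score(check) <= auto_limit else "manual review"
```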

Result


Once this work is done, you have an excellent starting point for duplicate matching.

The goal here is to leave as little manual work as possible to the address quality team.

Let’s move on to the duplicate check


If your organization has a high proportion of duplicates, cleaning them up can quickly become a Herculean task. But the work is worthwhile in any case.

What is the purpose of the merge? Depending on the answer, sharp (strict) or soft (lenient) evaluation criteria must be applied, so attention must be paid to both overkill (records wrongly merged) and underkill (duplicates missed).

Additional variables such as e-mail address, age or date of birth, or purchase data help decide which records are duplicates and which are not, and which record heads a duplicate group and which are its followers.
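The trade-off between sharp and soft criteria, and the role of additional variables, can be illustrated with Python’s standard `difflib.SequenceMatcher`. This is a generic string-similarity measure swapped in for illustration, not one of Address Solutions’ comparison methods; the thresholds and the 0.1 bonus for matching evidence are hypothetical.

```python
from difflib import SequenceMatcher

# Hypothetical thresholds: "sharp" limits overkill, "soft" limits underkill.
THRESHOLDS = {"sharp": 0.95, "soft": 0.85}


def name_similarity(a, b):
    return SequenceMatcher(None, a.lower(), b.lower()).ratio()


def is_duplicate(rec_a, rec_b, mode="sharp"):
    """Name similarity decides; additional variables confirm or veto the pair."""
    sim = name_similarity(rec_a["name"], rec_b["name"])
    for key in ("email", "birth_date"):
        va, vb = rec_a.get(key), rec_b.get(key)
        if va and vb:
            if va != vb:
                return False  # conflicting evidence: do not merge
            sim = min(1.0, sim + 0.1)  # matching evidence: hypothetical bonus
    return sim >= THRESHOLDS[mode]
```

Raising the threshold reduces overkill at the cost of more underkill, and vice versa.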

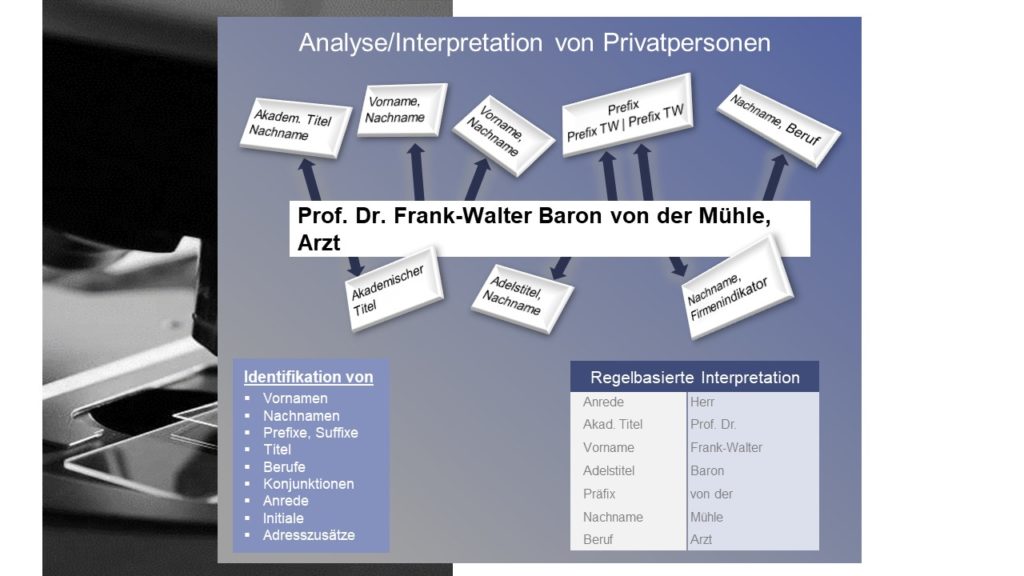

Resolving name components


Combining knowledge-based and rule-based interpretation makes it possible to resolve even complex name strings cleanly, for example “Prof. Dr. Frank-Walter Baron von der Mühle, physician”.
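A toy version of combining lexicon knowledge with positional rules is sketched below for the example name. The lexicons are hypothetical and tiny; the rules assume titles come first, followed by the first name, an optional nobility title and then the (possibly multi-word) last name, with a profession after the comma.

```python
# Tiny hypothetical lexicons; a real knowledge base would be far larger.
ACADEMIC_TITLES = {"prof.", "dr."}
NOBILITY_TITLES = {"baron", "graf", "freiherr"}


def parse_name(raw):
    """Split a full name string into titles, first name, nobility title,
    last name and profession using lexicon lookups plus positional rules."""
    name_part, _, profession = raw.partition(",")
    tokens = name_part.split()
    result = {"titles": [], "first_name": None, "nobility": None,
              "last_name": None, "profession": profession.strip() or None}
    i = 0
    while i < len(tokens) and tokens[i].lower() in ACADEMIC_TITLES:
        result["titles"].append(tokens[i])
        i += 1
    if i < len(tokens):
        result["first_name"] = tokens[i]
        i += 1
    rest = tokens[i:]
    if rest and rest[0].lower() in NOBILITY_TITLES:
        result["nobility"] = rest.pop(0)
    result["last_name"] = " ".join(rest) or None  # particles stay in the last name
    return result
```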

With the help of its European knowledge base, Address Solutions draws on its experience to process and resolve name details as automatically as possible. The example of SAL Auto-Leasing shows how this works for organizations as well.

A highlight is the variety of comparison methods at Address Solutions


This allows an organization to compare values within a field or across fields to find duplicates or similar records, for example Ursula and Uschi or, in English, Richard and Dick. Spelling variants are “washed out” with very fine-grained filters.
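Nickname-aware first-name comparison can be sketched as normalization through a variant table followed by a fuzzy string comparison. The nickname table is a hypothetical fragment, and `difflib.SequenceMatcher` stands in for the much more specialized methods described here.

```python
from difflib import SequenceMatcher

# Hypothetical fragment of a nickname table (variant -> canonical form).
NICKNAMES = {"uschi": "ursula", "dick": "richard", "rick": "richard"}


def canonical(first_name):
    name = first_name.strip().lower()
    return NICKNAMES.get(name, name)


def first_name_score(a, b):
    """1.0 for identical or nickname-equivalent names, otherwise a
    string-similarity ratio that tolerates small spelling variants."""
    ca, cb = canonical(a), canonical(b)
    if ca == cb:
        return 1.0
    return SequenceMatcher(None, ca, cb).ratio()
```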

There is no single standard configuration, but there are many very useful templates that can be applied to any data situation, for example sharp or soft matching for pure private-person datasets, pure company datasets or mixed datasets. These configuration templates can be loaded easily and, if really necessary, further optimized or refined.

Each of the available mathematical methods has advantages and disadvantages, so the Address Solutions team selects them specifically for the customer’s particular problem to get the most benefit from them.

A note on quality assurance is important to us


Some comparison methods are based on statistical procedures adapted individually to the content of the fields being compared, e.g. dedicated comparison methods for first names, last names, postal codes, city, street, house number, date of birth, telephone number, e-mail address, etc.

This means customers do not have to pick the best method from several hundred. Instead, the Address Solutions team supports them with these context-oriented comparison methods, each built from several suitable mathematical algorithms.


The goal is to obtain partial results that are as granular as possible, to which customer-specific rules are then applied. Address Solutions provides procedures for the customer to start with, then applies more refined configurations or methods, and the Address Solutions consulting team supports the customer closely throughout.

From the various partial scores, virtually any set of rules for detecting duplicates can be derived.

Together, the rules combined in a decision matrix form the basis for online search and duplicate matching.

The comparison values and decision rules serve primarily to combine records into duplicate groups; that is, they generate group suggestions for subsequent merging.
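Turning pairwise duplicate decisions into group suggestions is a connected-components problem; a small union-find, sketched below, is one standard way to solve it. The input format (pairs of record IDs already judged to be duplicates) is an assumption.

```python
def duplicate_groups(pairs):
    """Group record IDs that are linked, directly or indirectly, by duplicate pairs."""
    parent = {}

    def find(x):
        parent.setdefault(x, x)
        while parent[x] != x:
            parent[x] = parent[parent[x]]  # path halving keeps trees shallow
            x = parent[x]
        return x

    for a, b in pairs:
        root_a, root_b = find(a), find(b)
        if root_a != root_b:
            parent[root_b] = root_a  # union the two groups

    groups = {}
    for x in parent:
        groups.setdefault(find(x), []).append(x)
    return sorted(sorted(g) for g in groups.values())
```

Because grouping is transitive, a single wrong pair can chain two unrelated groups together, which is one reason the groups are only suggestions.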

Additional features


The rule set can also be used to search for specific groups of people, for example fraudsters or married women, or to use different legal forms to represent a holding.

The user is shown which rule took effect in each case, so the customer can trace in detail what happened.

About 20 to 30 rules are combined into a set of business rules (the so-called decision matrix) that the customer can use for duplicate detection.

In the summary, all rules that apply to a given duplicate are displayed. Excellent transparency!
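A decision matrix that also reports which rules fired might be sketched as an ordered list of threshold rules over the partial scores. The rule names, fields and minimum scores are hypothetical; only the idea of combining rules and showing which ones took effect comes from the text.

```python
# Hypothetical rules: (name, minimum partial score per field, decision).
MATCH_RULES = [
    ("R1 exact person", {"first_name": 1.0, "last_name": 1.0, "street": 0.9, "zip": 1.0}, "duplicate"),
    ("R2 same email", {"last_name": 0.9, "email": 1.0}, "duplicate"),
    ("R3 similar person", {"first_name": 0.85, "last_name": 0.85, "zip": 0.8}, "manual review"),
]


def decide(partial_scores):
    """Return the decision of the first matching rule plus the names of all
    rules that matched, so the user can see why a pair was classified."""
    fired = [
        (name, decision)
        for name, conditions, decision in MATCH_RULES
        if all(partial_scores.get(field, 0.0) >= minimum for field, minimum in conditions.items())
    ]
    decision = fired[0][1] if fired else "no duplicate"
    return decision, [name for name, _ in fired]
```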

The question about AI puts a smile on the faces of the Address Solutions team


“For years now, we have been using analysis methods that are now being paraded around as the latest trend under the AI label. Using and combining extraordinary methods and processes has always driven us, ever since the company was founded,” Ralf Geerken says, smiling into the video camera.

“We originally started out offering consulting. But we could not find suitable software that met our requirements, so we developed it ourselves. Before any two values are compared, we first analyze exactly what each field contains. In this way we try to replicate the human approach, for example when comparing two names, only much faster than a human can and, hopefully, with significantly fewer errors,” Jörg Kleinbrahm adds.

The Address Solutions team demonstrates its experience with both simple and complex examples.


Their attention to detail is also evident in this remark: “We have an enormous wealth of experience with possible sources of error. For example, when a postal code is entered, the first three digits are often correct, while digits four and five are more often entered incorrectly.”
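This observation translates naturally into position-dependent weights. The sketch assumes German five-digit postal codes and hypothetical weights under which a mismatch in digits 4 and 5 costs less than one in the first three digits, since it is more likely a typing error.

```python
# Hypothetical per-position penalties (sum = 1.0): later digits cost less.
WEIGHTS = (0.3, 0.25, 0.2, 0.15, 0.1)


def postal_code_score(a, b):
    """Similarity of two 5-digit postal codes, 1.0 = identical."""
    if len(a) != 5 or len(b) != 5:
        return 0.0
    penalty = sum(w for w, x, y in zip(WEIGHTS, a, b) if x != y)
    return round(max(0.0, 1.0 - penalty), 2)  # round away float noise
```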

A second example: when comparing house numbers, the side of the street is also taken into account, so house numbers “1” and “2” get a slightly lower score than “3” and “1”. Ranges are handled as well: a range such as “6-10” compared with “8” receives a high similarity score.
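These house-number rules can be sketched as follows, assuming that equal parity means the same side of the street. The score values (1.0, 0.9, 0.6, 0.5) are hypothetical; a fuller version would probably also take the numeric distance into account.

```python
import re


def parse_house_number(value):
    """'8' -> (8, 8); '6-10' -> (6, 10). Letter suffixes such as '8a' are ignored."""
    numbers = [int(n) for n in re.findall(r"\d+", value)]
    if not numbers:
        return None
    return min(numbers), max(numbers)


def house_number_score(a, b):
    ra, rb = parse_house_number(a), parse_house_number(b)
    if ra is None or rb is None:
        return 0.0
    if ra == rb:
        return 1.0
    if (ra[0] <= rb[0] and rb[1] <= ra[1]) or (rb[0] <= ra[0] and ra[1] <= rb[1]):
        return 0.9  # one number or range lies inside the other
    if ra[0] % 2 == rb[0] % 2:
        return 0.6  # same side of the street
    return 0.5  # opposite side of the street
```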

The result:

Maximum flexibility. Address Solutions’ various tools are at their most fun when millions of records are involved.

With AS Inspect, you get a robust quality assessment based on a small but meaningful amount of data. You do not have to analyze several million records; about 25,000 records are sufficient to check a given postal code area. If duplicate cleaning is then carried out with the duplicate software, the quality assessment of course still holds!
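Whether about 25,000 records are enough can be checked against the usual margin-of-error formula for an estimated proportion, such as an error rate. This assumes a simple random sample and the normal approximation, neither of which the article states, so it only illustrates the order of magnitude: for n = 25,000 the worst-case 95% margin of error is roughly ±0.6 percentage points.

```python
import math


def margin_of_error(n, p=0.5, z=1.96):
    """Approximate 95% margin of error for a proportion p estimated from a
    simple random sample of n records (p = 0.5 is the worst case)."""
    return z * math.sqrt(p * (1 - p) / n)
```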

In which program does the user process the duplicates that have been sorted out?

Address Solutions has a pool of processing solutions that can be quickly adapted to the customer’s specific requirements.

The logic for creating the Golden Record must also be developed specifically in each case. It is built from customer-specific basic tools and supported by a variety of templates from the pool mentioned above.
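A golden record is commonly built with survivorship rules that pick one value per field from the duplicate group. The sketch below uses a single hypothetical rule, “the most recently updated non-empty value wins”, and assumes each record carries an ISO-formatted `updated` date string; as described above, actual rules would be developed per customer.

```python
def golden_record(group, fields):
    """Merge a duplicate group into one record: per field, take the most
    recently updated non-empty value (None if no record has one)."""
    # ISO dates (YYYY-MM-DD) sort correctly as plain strings
    newest_first = sorted(group, key=lambda r: r["updated"], reverse=True)
    return {
        field: next((r[field] for r in newest_first if r.get(field)), None)
        for field in fields
    }
```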

Our opinion on this:

Great, because standard tools often do more harm than good. However costly this approach may seem, the quality is high in the end. By building on the templates, the software can be aligned completely with the specifications and ideas of the specialist department, which does not have to accept any compromises in quality because of the software.

For which target groups is the product suitable?

Users in companies who are responsible for address quality, MarTech and CRM topics.

Company target group:

Generally companies with 250,000 or more records.

But there are also customers with about 50,000 customer records for whom maintaining their valuable data is important, and they use the Address Solutions tools as well.

About 20 large customers use Address Solutions products within an SAP system through an SAP-certified partner. These applications manage between 10,000 and 100,000 records.

Our conclusion


The fewer manual decisions employees have to make, the better. Even whether 1% more or fewer records are sorted out for manual processing makes a significant difference. It is therefore absolutely right to put the emphasis on the quality of the methods.

Address Solutions has been using and refining AI methods for many years, matching millions of records from dozens of different systems.

Every customer wants duplicate processing tailored to their own screens through customized solutions, and gets exactly that.

For each variable and each possible case there is a basic set of rules, which can in turn be adjusted to the needs and specifics of the company.

Address Solutions does not just want to provide software; it also consults individually on the basis of the analyses, because this topic always has to be handled individually for each customer. A very good approach from the team at AS Address Solutions GmbH!

What are the references?

For an overview of Address Solutions’ references, please feel free to contact:

Jörg Kleinbrahm

AS Address Solutions GmbH

Kaiserplatz 6

52222 Stolberg/Rheinland

Tel. : 0 24 02 / 76 49 19

Fax : 0 24 02 / 76 49 16

Mobile : 01 73 / 73 250 93

E-mail: J.Kleinbrahm@Address-Solutions.de